The rapid rise of large language models (LLMs) and AI agents is redefining how software systems interact. APIs are no longer just passive data gateways; they are becoming active collaborators that AI agents rely on to reason, decide, and act. In this new era, building APIs without considering AI agents can severely limit scalability, automation, and innovation.

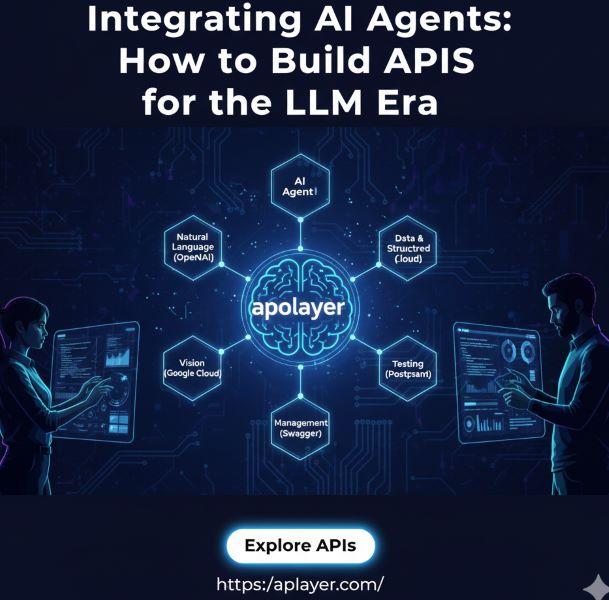

As one of the top API platforms in the world, apilayer is at the forefront of this transformation, enabling developers and businesses to integrate reliable, scalable, and AI-ready APIs into modern applications.

This article explores how to design and integrate APIs specifically for the LLM era, combining proven engineering principles with forward-looking strategies tailored for AI agents.

APIs in the Age of AI Agents

Traditional APIs were built for human developers writing deterministic code. AI agents, however, consume APIs very differently. They operate autonomously, chain multiple calls together, and interpret responses probabilistically. This shift requires a new mindset: APIs must be machine-intuitive, not just human-readable.

AI agents use APIs as tools: fetching data, validating inputs, triggering workflows, and making decisions in real time. Whether it’s a customer support agent validating phone numbers or a sales agent enriching leads, APIs are now part of the AI reasoning loop.

What Makes an API LLM-Ready?

An LLM-ready API goes beyond basic REST compliance. It is designed with clarity, predictability, and structure so that AI agents can reliably understand and use it.

Key characteristics include:

- Clear, descriptive endpoint naming

- Strong schema definitions using OpenAPI

- Consistent response formats

- Explicit error messages

- Predictable behavior under load

These principles align closely with modern API best practices for developers, but their importance multiplies when the consumer is an AI agent rather than a human.

Designing APIs as Tools for AI Agents

One of the biggest shifts in the LLM era is treating APIs as tools, not endpoints. AI agents benefit from APIs that explicitly describe what action they perform.

For example:

- Instead of /v1/check, use /validate-phone-number

- Instead of vague responses, return structured, labeled data

This approach can improve tool-selection accuracy and reduce hallucinated calls and parameters. When an endpoint's name and response fields state their purpose, a model can better decide when and why to call it, which leads to more reliable automation.

apilayer’s APIs exemplify this philosophy by offering clear, purpose-driven endpoints across data validation, enrichment, finance, geolocation, and more, making them ideal building blocks for AI-powered systems.
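To make "structured, labeled data" concrete, here is a minimal Python sketch of what a phone-validation response might look like. The endpoint name comes from the example above; the `PhoneValidationResult` class, its field names, and the toy validation rule are hypothetical and do not describe any real apilayer API.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


# Hypothetical response model for a /validate-phone-number endpoint.
# Field names are illustrative only, not apilayer's actual schema.
@dataclass
class PhoneValidationResult:
    submitted: str             # echoed back so an agent can match results to calls
    valid: bool                # an explicit boolean, not a vague "ok"/"fail" string
    normalized: Optional[str]  # canonical form when valid, otherwise None
    reason: Optional[str]      # readable explanation when valid is False


def validate_phone_number(raw: str) -> PhoneValidationResult:
    # Toy rule for illustration: "+" followed by 8 to 15 digits after
    # stripping spaces and dashes. Real validation needs numbering-plan data.
    normalized = raw.replace(" ", "").replace("-", "")
    digits = normalized[1:]
    if normalized.startswith("+") and digits.isdigit() and 8 <= len(digits) <= 15:
        return PhoneValidationResult(raw, True, normalized, None)
    return PhoneValidationResult(raw, False, None, "expected '+' followed by 8 to 15 digits")


def to_json(result: PhoneValidationResult) -> str:
    # Every field is always present, so an agent never has to guess at missing keys.
    return json.dumps(asdict(result))
```

Because every field is always present and labeled, an agent can branch on `valid` and quote `reason` back to a user without parsing free text.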

Schema-First API Design for AI

AI agents thrive on structure. Loose or inconsistent responses increase failure rates and misinterpretation. A schema-first approach ensures both humans and machines understand the API contract.

Best practices include:

- Use OpenAPI and JSON Schema

- Define required vs optional fields

- Standardize error responses

- Include example payloads

Well-defined schemas also enable better tool calling in LLMs and reduce friction during API integration. This is especially important when building autonomous workflows where multiple APIs interact without human intervention.
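A minimal sketch of the schema-first idea, assuming a hypothetical phone-validation request: the contract is written once as a JSON Schema-style dict (required vs optional fields, descriptions), and every violation is reported in one standardized error shape. The checker supports only a tiny subset of JSON Schema for illustration; a real service would use a full validator such as the `jsonschema` package.

```python
from typing import Any, Dict, List

# Hypothetical request contract, written once and shared by docs, server, and agents.
REQUEST_SCHEMA: Dict[str, Any] = {
    "type": "object",
    "properties": {
        "number": {"type": "string", "description": "Phone number in E.164 format"},
        "country_hint": {"type": "string", "description": "Optional ISO 3166-1 alpha-2 code"},
    },
    "required": ["number"],
    "additionalProperties": False,
}

# Minimal subset of JSON Schema types supported by this sketch.
_TYPES = {"string": str, "boolean": bool, "object": dict}


def check_request(payload: Dict[str, Any], schema: Dict[str, Any]) -> List[Dict[str, str]]:
    """Return standardized error objects; an empty list means the payload is valid."""
    errors: List[Dict[str, str]] = []
    for name in schema.get("required", []):
        if name not in payload:
            errors.append({"code": "missing_field", "field": name,
                           "message": f"'{name}' is required"})
    properties = schema.get("properties", {})
    for name, value in payload.items():
        if name not in properties:
            if schema.get("additionalProperties", True) is False:
                errors.append({"code": "unknown_field", "field": name,
                               "message": f"'{name}' is not part of the contract"})
            continue
        expected = properties[name]["type"]
        if not isinstance(value, _TYPES[expected]):
            errors.append({"code": "wrong_type", "field": name,
                           "message": f"'{name}' must be of type {expected}"})
    return errors
```

Every error carries the same three keys (`code`, `field`, `message`), so an agent can decide programmatically whether to fix its input and retry.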

Authentication and Security for Autonomous Systems

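Security becomes more complex when AI agents operate independently. A single long-lived API key with broad access is often insufficient, because an agent may call endpoints in combinations and volumes that no human developer anticipated.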

Modern AI-aware security strategies include:

- Scoped tokens with limited permissions

- Rate limits designed for agent loops

- Intent-based access control

- Validation layers that mitigate prompt injection carried through API inputs and responses

apilayer’s secure authentication mechanisms allow developers to safely expose APIs to AI agents without compromising data integrity or system stability.
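The sketch below combines two of the strategies listed above: scoped, short-lived tokens and a per-agent token-bucket rate limit that absorbs bursty agent loops. The scope names, bucket sizes, and the `authorize` helper are hypothetical; the point is that each failure mode maps to a distinct, explicit status code (401, 403, 429) that an agent can act on.

```python
import time
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class ScopedToken:
    agent_id: str
    scopes: frozenset   # e.g. frozenset({"phone:validate"}); scope names are hypothetical
    expires_at: float   # Unix time; short lifetimes limit damage from a leaked token


class TokenBucket:
    """Allows short bursts up to `capacity`, then a sustained `refill_per_sec` rate."""

    def __init__(self, capacity: int, refill_per_sec: float) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.tokens = float(capacity)
        self.last = time.monotonic()

    def allow(self) -> bool:
        now = time.monotonic()
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


_buckets: Dict[str, TokenBucket] = {}  # one bucket per agent


def authorize(token: ScopedToken, required_scope: str,
              now: Optional[float] = None) -> Tuple[int, str]:
    """Return an HTTP-style status code and an explicit, machine-readable reason."""
    now = time.time() if now is None else now
    if now >= token.expires_at:
        return 401, "token_expired"
    if required_scope not in token.scopes:
        return 403, f"missing_scope:{required_scope}"
    bucket = _buckets.get(token.agent_id)
    if bucket is None:
        bucket = _buckets[token.agent_id] = TokenBucket(capacity=5, refill_per_sec=1.0)
    if not bucket.allow():
        return 429, "rate_limited"  # a real API would also send a Retry-After header
    return 200, "ok"
```

Keeping the bucket per agent rather than per key means one runaway agent loop is throttled without starving other agents that share the same account.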

Handling Context, State, and Memory

APIs are typically stateless, but AI agents rely heavily on context. The challenge is enabling context-aware workflows without breaking API simplicity.

Effective techniques include:

- Session identifiers for chained requests

- Context tokens passed explicitly

- External memory stores (databases or vector stores)

- APIs returning metadata that helps compress or summarize context

By keeping APIs stateless while supporting contextual workflows, developers can scale AI agent interactions without excessive complexity.
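One way to combine explicit session identifiers, an external memory store, and context-compressing metadata is sketched below. The handler itself stays stateless: the agent passes `session_id` back on each call, history lives in a store (a plain dict here, standing in for something like Redis or a database), and the response returns a compact summary rather than the full history. All names are illustrative assumptions.

```python
import secrets
from typing import Any, Dict, List


class MemoryStore:
    """In-memory stand-in for an external store such as Redis or a database."""

    def __init__(self) -> None:
        self._events: Dict[str, List[Dict[str, Any]]] = {}

    def append(self, session_id: str, event: Dict[str, Any]) -> None:
        self._events.setdefault(session_id, []).append(event)

    def all(self, session_id: str) -> List[Dict[str, Any]]:
        return list(self._events.get(session_id, []))


def handle_request(store: MemoryStore, payload: Dict[str, Any]) -> Dict[str, Any]:
    # The handler keeps no state of its own: everything it needs arrives in the
    # payload or lives in the external store, keyed by an explicit session id.
    session_id: str = payload.get("session_id") or secrets.token_hex(8)
    query = payload["query"]
    store.append(session_id, {"query": query})
    events = store.all(session_id)
    return {
        "session_id": session_id,    # the agent passes this back on its next call
        "result": {"query": query},  # placeholder for the endpoint's real work
        "context": {                 # compact metadata instead of the full history
            "calls_in_session": len(events),
            "recent_queries": [e["query"] for e in events[-3:]],
        },
    }
```

Because no request depends on which server instance handled the previous one, instances can be added or replaced freely while agents still get continuity through `session_id`.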

Performance and Reliability in Agent-Based Workflows

AI agents often retry requests, explore alternatives, and call APIs in rapid succession. APIs must be resilient to this behavior.

Key considerations:

- Idempotent endpoints to handle retries

- Intelligent caching strategies

- Graceful degradation when dependencies fail

- Observability tools to track agent usage patterns

These principles align closely with real-world API integration tips and examples, especially for systems operating at scale.
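Idempotency is what makes agent retries safe. A common pattern, sketched here under assumed names, is an idempotency key: the client generates one key per logical operation (for example a UUID) and reuses it on every retry, and the server replays the stored response instead of repeating the side effect. The in-memory dict stands in for a shared store with expiry.

```python
import time
from typing import Any, Callable, Dict, Tuple

Body = Dict[str, Any]


class IdempotentEndpoint:
    """Server side: replays the stored response when an idempotency key repeats."""

    def __init__(self, action: Callable[[Body], Body]) -> None:
        self._action = action
        # key -> (request body, response); production code would use a shared store with a TTL
        self._seen: Dict[str, Tuple[Body, Body]] = {}

    def post(self, key: str, body: Body) -> Body:
        if key in self._seen:
            first_body, first_response = self._seen[key]
            if first_body != body:
                return {"error": {"code": "idempotency_key_reused",
                                  "message": "key was already used with a different body"}}
            return first_response  # a retry: no second side effect
        response = self._action(body)
        self._seen[key] = (body, response)
        return response


def call_with_retries(send: Callable[[], Body], attempts: int = 3,
                      base_delay: float = 0.1) -> Body:
    """Client side: retry transient failures with exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(attempts):
        try:
            return send()
        except ConnectionError:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)  # 0.1 s, 0.2 s, 0.4 s, ...
```

If the first request succeeds on the server but its response is lost, `call_with_retries` resends with the same key and receives the original response, so only one side effect occurs. Reusing a key with a different body is rejected rather than silently replayed.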

Documentation for Humans and Machines

Documentation is no longer just for developers. AI agents increasingly rely on machine-readable documentation to understand how to use APIs.

Modern documentation should include:

- OpenAPI specs

- Structured examples

- Clear descriptions of intent

- Consistent terminology

Some teams are even experimenting with documentation written in a prompt-friendly format (concise, intent-first descriptions that can be passed directly to a model), an emerging trend that aligns well with LLM-based integrations.

apilayer excels here by providing clear, well-structured documentation that accelerates both human development and AI agent adoption.
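Machine-readable documentation pays off when it can be turned into tool definitions automatically. The sketch below converts operations from a small, hypothetical OpenAPI 3 fragment into a generic name / description / JSON Schema parameters shape, similar to what function-calling LLM interfaces expect. Exact key names vary by provider, and request bodies are ignored for brevity.

```python
from typing import Any, Dict, List

# A tiny, hypothetical OpenAPI 3 fragment.
SPEC: Dict[str, Any] = {
    "openapi": "3.0.3",
    "paths": {
        "/validate-phone-number": {
            "get": {
                "operationId": "validate_phone_number",
                "summary": "Check whether a phone number is valid.",
                "parameters": [
                    {"name": "number", "in": "query", "required": True,
                     "description": "Phone number in E.164 format, e.g. +14155550100",
                     "schema": {"type": "string"}},
                    {"name": "country_hint", "in": "query", "required": False,
                     "description": "Optional ISO 3166-1 alpha-2 country code",
                     "schema": {"type": "string"}},
                ],
            }
        }
    },
}

HTTP_METHODS = {"get", "post", "put", "patch", "delete"}


def openapi_to_tools(spec: Dict[str, Any]) -> List[Dict[str, Any]]:
    tools = []
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            if method not in HTTP_METHODS:  # skip path-level keys such as shared "parameters"
                continue
            properties: Dict[str, Any] = {}
            required: List[str] = []
            for param in op.get("parameters", []):
                prop = dict(param.get("schema", {}))
                if "description" in param:
                    prop["description"] = param["description"]  # intent, in plain language
                properties[param["name"]] = prop
                if param.get("required"):
                    required.append(param["name"])
            fallback_name = f"{method}_{path.strip('/').replace('/', '_')}"
            tools.append({
                "name": op.get("operationId", fallback_name),
                "description": op.get("description") or op.get("summary", ""),
                "parameters": {"type": "object", "properties": properties, "required": required},
            })
    return tools
```

The quality of the generated tool depends entirely on the spec: a clear `operationId`, summary, and parameter descriptions become exactly the text the model reads when deciding whether to call the API.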

Real-World Use Cases of AI Agent API Integration

AI-agent-driven API usage is already transforming industries:

- Customer support agents validating contact details in real time

- Sales automation enriching leads with location and company data

- Fraud detection systems combining multiple APIs for risk scoring

- Onboarding workflows verifying identity and data automatically

These use cases highlight why choosing a reliable API platform matters. apilayer’s extensive API marketplace enables developers to compose powerful AI workflows from trusted data sources.
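Workflows like fraud scoring or onboarding typically fan out to several APIs at once. The following asyncio sketch uses hypothetical stub functions in place of real HTTP calls. It runs the checks concurrently with a per-call timeout and degrades gracefully: a failing dependency is recorded in `degraded` instead of aborting the whole workflow.

```python
import asyncio
from typing import Any, Dict


# Hypothetical stand-ins for separate validation APIs; real code would use an HTTP client.
async def validate_email(email: str) -> Dict[str, Any]:
    await asyncio.sleep(0.01)
    return {"email_valid": "@" in email}


async def validate_phone(number: str) -> Dict[str, Any]:
    await asyncio.sleep(0.01)
    return {"phone_valid": number.startswith("+")}


async def lookup_ip(ip: str) -> Dict[str, Any]:
    await asyncio.sleep(0.01)
    raise TimeoutError("geolocation service unavailable")  # simulate a failing dependency


async def onboarding_check(email: str, phone: str, ip: str,
                           timeout: float = 2.0) -> Dict[str, Any]:
    calls = {
        "email": validate_email(email),
        "phone": validate_phone(phone),
        "ip": lookup_ip(ip),
    }
    # Run all checks concurrently; exceptions are returned as values, not raised.
    results = await asyncio.gather(
        *(asyncio.wait_for(c, timeout) for c in calls.values()),
        return_exceptions=True,
    )
    signals: Dict[str, Any] = {}
    degraded = []
    for name, outcome in zip(calls, results):
        if isinstance(outcome, Exception):
            degraded.append(name)  # record the gap instead of failing the whole workflow
        else:
            signals.update(outcome)
    return {"signals": signals, "degraded": degraded}
```

Returning `degraded` explicitly lets the agent (or a downstream risk score) treat missing signals as unknown rather than as a pass or a fail.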

Common Mistakes to Avoid

When building APIs for AI agents, avoid:

- Vague endpoint naming

- Unstructured responses

- Inconsistent error formats

- Treating AI agents like traditional users

- Ignoring monitoring and analytics

APIs built without AI in mind may work initially but will struggle as automation scales.

Future-Proofing APIs for the AI-First Web

The future of APIs is collaborative. As AI agents become multimodal and more autonomous, APIs will evolve into adaptive interfaces that support reasoning, planning, and execution.

Forward-thinking developers should:

- Design APIs with extensibility in mind

- Embrace schema-driven development

- Monitor emerging agent protocols, such as the Model Context Protocol (MCP)

- Choose API providers that prioritize reliability and clarity

Platforms like apilayer are already setting this standard by offering scalable, AI-ready APIs trusted by developers worldwide.

Frequently Asked Questions

What are AI agents, and how do they use APIs?

AI agents are autonomous systems powered by LLMs that use APIs as tools to fetch data, perform actions, and make decisions without human intervention.

Do I need to redesign existing APIs for AI agents?

Not always, but adapting them with clearer schemas, structured responses, and descriptive endpoints significantly improves AI compatibility.

Why is apilayer suitable for AI-driven applications?

apilayer provides reliable, well-documented, and scalable APIs that are easy for both developers and AI agents to consume.

How many APIs can an AI agent use at once?

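There is no single fixed number. The practical limit depends on the agent framework and the model: every tool definition consumes context, and some providers cap the number of tools per request. AI agents routinely orchestrate multiple APIs in a single workflow, which makes consistency and reliability across those APIs critical.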

Build AI-Ready Applications with apilayer

If you’re building applications for the LLM era, your APIs matter more than ever.

apilayer offers one of the world’s leading API marketplaces: trusted, scalable, and designed for modern automation.

👉 Explore powerful, AI-ready APIs today at https://apilayer.com/

👉 Build smarter integrations.

👉 Power the next generation of AI agents with confidence.